Seeing Like a Newsfeed

Machine Learning Through Machine Poetry

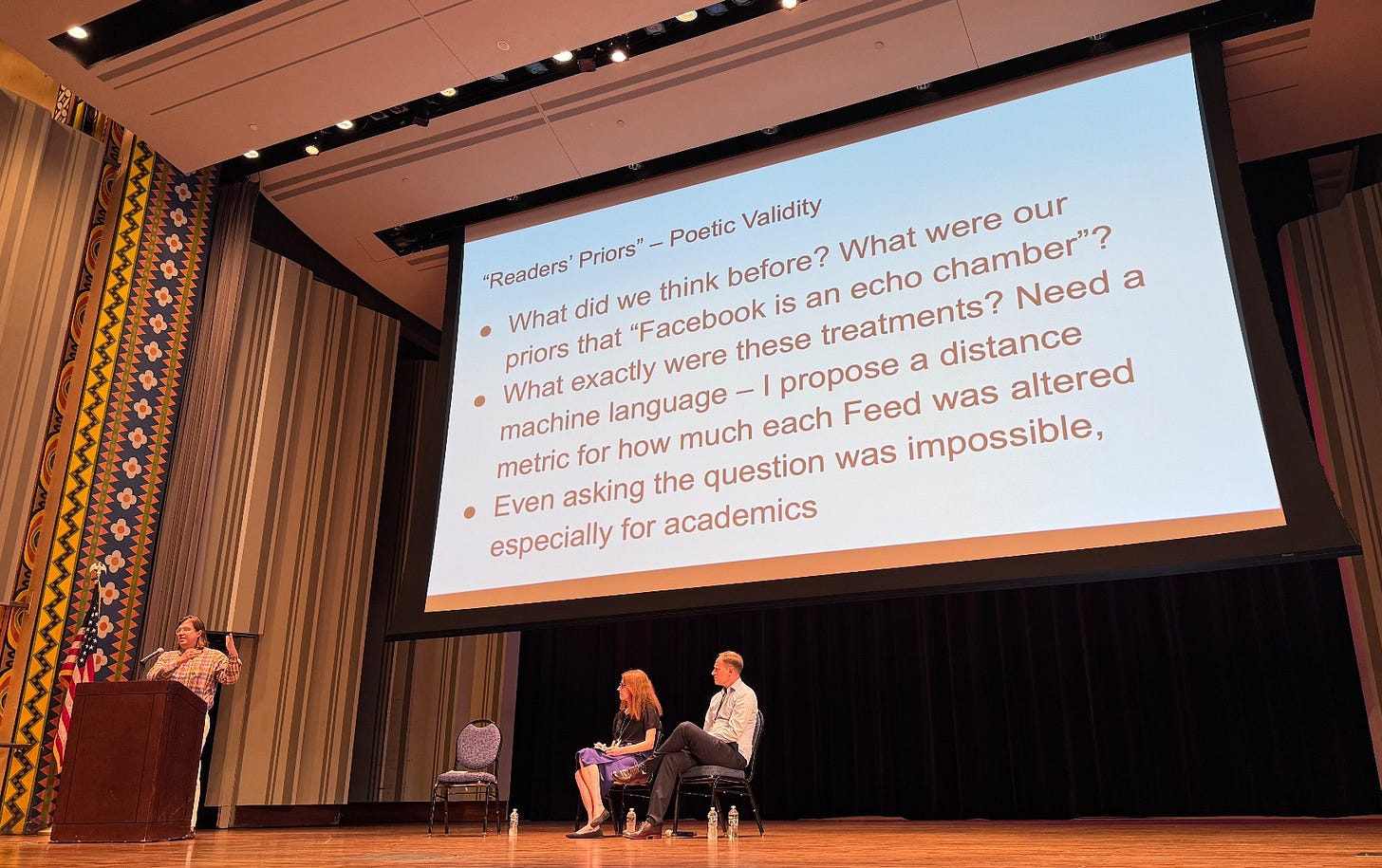

Note: this is the fourth and final post discussing the 2020 Meta Election research partnership and the resulting papers, surrounding a keynote panel at IC2S2 on the topic last Thursday July 18; here are the first, second and third.

I recently conducted a field experiment designed to improve the quality of discussion on political Twitch streams. The experiment was, by standard deductivist criteria, a failure: we didn’t find what we expected, our results had too much uncertainty, and we can’t rigorously distinguish whether this was because our theory was incorrect or because some auxiliary hypothesis (see Ben Recht’s explication of Meehl’s framework) about how to operationalize our experiment turned out to be wrong.

But this is because the standard deductivist criteria are stupid, at least when applied in this context. No one had ever done an experiment like this before; we had no reason to have any confidence in our operationalization. The main thing we “learned,” the majority of the knowledge we produced, was qualitative. We learned how to conduct the experiment — many things that didn’t work, and some that we think might work better next time. Some of what we learned looks quantitative: there were some tricky statistical issues with defining the control group and with estimating the causal effects in specific time windows. But even this was fundamentally about statistical best practices, about how the scientist should interact with the world—in this case, the virtual world of the Twitch stream.

Like my Twitch experiment, the main form of knowledge that the Meta2020 research partnership generated was qualitative. My fear is that this fact is missed because of the high-profile attention paid to the most Science-y deductive outcomes. “The point estimate for the effect of Facebook deactivation on Trump vote is a reduction of 0.026 units (P = 0.015, Q = 0.076)…This effect falls just short of our preregistered significance threshold of Q < 0.05.”

In a previous post, I asked: “So what?” The answer provided by the quoted article is that we update our priors “about the potential effects of social media in the final weeks of high-profile national elections.” But I think that this answer is low in “poetic validity.” In other words: what is “social media”?1

At least the treatment in the desistance experiment is ontologically clear: you stop using Facebook or Instagram entirely. The other three experiments which have been published so far involve a more complicated modification to the architecture of the recommendation algorithm. In each case, the control condition was the standard newsfeed, and the respective treatment conditions were:

A chronological feed, arranging content in the order it was posted

A feed which removed all content which had been reshared

A feed which reduced the amount of content from “like-minded” sources by one-third

Each of these interventions was motivated by existing theoretical concerns about the effects of social media. But what exactly are these treatments?

Here, poetic validity is a serious problem — but the solution is the converse of what I argued when discussing how fears about “echo chambers” reveal the fundamental narcissism of social media.

Rather than refining the link between the space of our human-language theory and our scientific hypotheses, we need to go in the opposite direction: from our scientific hypotheses to the machine-language reality of the virtual worlds we’re studying. We need to enter the embedding space. We need to become embedded.

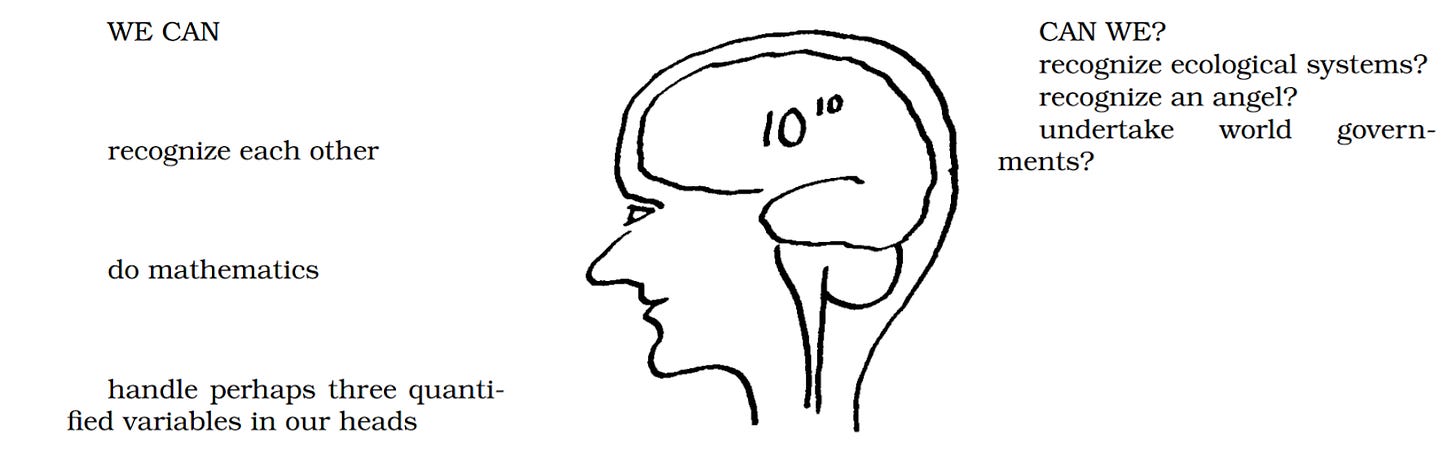

Our human-scale intuitions about the functioning of these virtual worlds tend to be very, very wrong. Their dimensions are simply incommensurate with those of our sensory and computational apparatus, as Stafford Beer’s rough-and-ready calculations illustrate.

In some ways, a virtual social science is easier. While the three-dimensional world is ontologically complex and countless orders of magnitude larger than humans can comprehend, Facebook is ontologically simple. These virtual, controlled environments are literally made up by technology created by humans.

So while the ontology of social media is well-defined, we need to take it seriously. With all due respect to James C. Scott (RIP), we need to work on seeing like a newsfeed.

In order to operationalize the human-language theories (like “content from like-minded sources causes XYZ”), the academics and especially the Facebook research scientists needed to do a massive amount of work to actually understand what was going on at the level of the technical infrastructure. Nobody had ever measured these concepts in real-time and at scale before — let alone intervened on them.

The knowledge of how to actually do these experiments is necessarily qualitative or even tacit; it’s also not particularly useful to anyone who doesn’t work at Meta or have the ability to interact directly with Meta’s architecture. Still, in my view the most valuable output of these studies would be extensive accounts of what was actually done — in human language, in a way that is hopefully interoperable with humans trying to understand and interact with other social media-style systems.

Having heard various iterations of the presentations by the Meta2020 collaborators, I can report that a common refrain about the conduct of the experiment is that they had to “build the plane while flying it”—that they were scrambling to figure out how to make everything work, that they didn’t have the luxury of planning things in advance. It’s an “agile” business cliche, but we would benefit by taking it seriously: how did they build the plane? This is the knowledge I really wish the team had prioritized as an output.

In another direction, a major quantitative extension of the existing studies would be to simply re-describe the treatments (and estimate their effects) in machine language. I propose a distance metric for how much each treated newsfeed was altered.

So, each control feed entails a personalized ranking of every piece of content which could be shown: content ranked first is shown first, ranked second is shown second, and so on. Each treatment involves a modified ranking, so that (say) the content ranked second is shown first, and the content ranked fourth is shown second. More generally, here is the control and possible treatments:

Control: 1, 2, 3, 4, 5

Treatment A: 1, 4, 9, 16, 25

Treatment B: 3, 5, 7, 11, 13

We can then easily compute a distance measure that reduces each treatment to a scalar: the magnitude of the intervention. For example, a measure which sums the square of each pairwise distance:

Distance(Control, Treatment A) = (1-1)^2 + (2-4)^2 +(3-9)^2 +(4-16)^2 +(5-25)^2

= 4 + 36 + 144 + 400 = 584

Distance(Control, Treatment B) = (1-3)^2 + (2-5)^2 +(3-7)^2 +(4-11)^2 +(5-13)^2

= 4 + 9 + 16 + 49 + 64 = 142.
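The arithmetic above can be sketched in a few lines of Python. This is only an illustration of the toy measure: the function name `squared_rank_distance` is made up here, the rankings are the ones from the example, and nothing in it reflects how Meta actually represents a feed.

```python
def squared_rank_distance(control, treatment):
    """Sum of squared position-wise differences between two equal-length rankings."""
    if len(control) != len(treatment):
        raise ValueError("rankings must have the same length")
    return sum((c - t) ** 2 for c, t in zip(control, treatment))


# Each list gives, slot by slot, the control-feed rank of the content shown in that slot.
control = [1, 2, 3, 4, 5]
treatment_a = [1, 4, 9, 16, 25]
treatment_b = [3, 5, 7, 11, 13]

distance_a = squared_rank_distance(control, treatment_a)
distance_b = squared_rank_distance(control, treatment_b)

print(distance_a)  # 584
print(distance_b)  # 142
```

Any other distance between rankings could be swapped in for the sum of squares; the point is only that each treated feed reduces to a single number.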

The specific numbers and even the specific measure are unimportant; I’m just gesturing towards how we can think about comparing the magnitude of different newsfeed interventions. Here, look, I’m literally gesturing towards the idea:

One huge advantage of this approach is that it makes all of the treatments across the three experiments commensurable. Once we have a machine language, we can calculate the effects on our outcomes of interest not in terms of treatments as “theories” but rather in terms of treatments as algorithmic interventions. We could then pool all of the control groups and estimate the pooled treatment effects not as a binary yes/no but rather as a continuous function of the magnitudes of the algorithmic intervention.
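As a rough sketch of what “a continuous function of the magnitudes” could mean in practice, here is a one-variable least-squares fit of an outcome on the intervention magnitude, with control units assigned a magnitude of zero. Everything in it is an assumption made for illustration: the helper name `fit_line` is invented, and the numbers are placeholders chosen to lie exactly on a line so the result is easy to check. They are not estimates from any of the published studies.

```python
def fit_line(doses, outcomes):
    """Ordinary least squares for outcome = intercept + slope * dose."""
    n = len(doses)
    mean_x = sum(doses) / n
    mean_y = sum(outcomes) / n
    sxx = sum((x - mean_x) ** 2 for x in doses)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(doses, outcomes))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Invented placeholder data: three pooled control units (dose 0) and
# two units each at two hypothetical intervention magnitudes.
doses = [0, 0, 0, 142, 142, 584, 584]
outcomes = [5.0, 5.0, 5.0, 4.29, 4.29, 2.08, 2.08]

slope, intercept = fit_line(doses, outcomes)
print(slope, intercept)  # change in outcome per unit of magnitude, and the control baseline
```

A real analysis would need to handle the fact that dosage is not randomly assigned within a treatment arm, so a straight line like this is a description, not a causal dose-response curve.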

Another advantage is that we could descriptively understand what kinds of people received larger or smaller “dosages” (magnitudes) of each metaphorical treatment. For the “like-minded sources” treatment, for example, non-political people might have received a higher “dosage” because removing these sources might have required going much farther down the rank ordering. (Or maybe I’m exactly wrong and non-political people received much lower dosages. How would I know — I don’t speak newsfeed.)

Crucially, we could also see what kind of treatments caused people to change how much they used Facebook or Instagram. This relates to a subtle limitation of these three newsfeed experiments: some of the subjects simply decide to sharply reduce or altogether eliminate their usage of the treated platform. For these subjects, the newsfeed manipulation in fact functions more like the “desistance” experiment. The authors of the “chronological feed” experiment note that this is a common occurrence; people simply stopped using the platform rather than using a chronological feed.

My intuition here is that the magnitude of the treatment for the chronological feed is much larger than for the other two newsfeed manipulations. The baseline chronological feed, depending on who (and in particular, which Pages and Groups) a person has chosen to follow, is completely unusable. If you follow only a handful of high-frequency accounts, a chronological feed will be totally dominated by their posts — although your friend’s baby announcement would be ranked first in the algorithmic feed, it would be drowned out by a few random meme pages or political accounts.
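That intuition can be made concrete with a toy feed. The sketch below invents six posts, each with a rank in the algorithmic (control) feed and an age in hours, then re-sorts them newest-first and scores the reordering with the same sum-of-squares measure. The post labels, ranks and ages are all hypothetical, as is the helper `shown_ranks`.

```python
# Hypothetical posts: (label, rank in the algorithmic control feed, hours since posting).
posts = [
    ("friend_baby_announcement", 1, 20),
    ("close_friend_photo", 2, 9),
    ("local_news_story", 3, 6),
    ("meme_page_post_a", 4, 1),
    ("meme_page_post_b", 5, 2),
    ("political_account_post", 6, 3),
]


def shown_ranks(posts, key):
    """Control-feed ranks of the posts, in the order a feed sorted by `key` shows them."""
    return [rank for _, rank, _ in sorted(posts, key=key)]


control = shown_ranks(posts, key=lambda p: p[1])        # algorithmic order
chronological = shown_ranks(posts, key=lambda p: p[2])  # newest first

distance = sum((c - t) ** 2 for c, t in zip(control, chronological))

print(control)        # [1, 2, 3, 4, 5, 6]
print(chronological)  # [4, 5, 6, 3, 2, 1]
print(distance)       # 62
```

In this made-up example the baby announcement drops from the first slot to the last, and the frequent posters take over the top of the feed.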

This “distance measure” of the magnitude of the treatments is just a sketch of how we might translate our theoretical expectations into the machine-language world in which the actual operations and observations reside. We could translate the outcomes back to the human-language world inductively, by first identifying the units with the largest dosage/response treatment effects, and then by modelling which of their measured characteristics are most predictive of these larger treatment effects.

It’s worth noting that actual machine learning has been proceeding in this direction for nearly a decade. When I first encountered these methods during my PhD, supervised ML was still dominant. But the explosive growth of unsupervised ML techniques like (word) embeddings has demonstrated their superiority — at least when there’s enough data.

Supervised ML requires human researchers to impose our own human-language categories onto the data; the best supervised ML is simply a replacement for an army of normal humans imposing order on data. Unsupervised ML, however, is genuinely superhuman. It can “see” data in higher dimensions than any human can comprehend. It learns about the structure of the data itself, and develops lower-dimensional representations of the full data — these are machine-language categories, and they are fit-for-purpose. For machine purpose.

A pressing question, for us humans, is how to use these highly learned machines for our purposes. The past two years of playing around with chat gippity have made that clear. I think we’re pretty far from an answer. Indeed, the best uses of LLMs in political science2 have involved reducing them back into massively pre-trained supervised ML! At the end of the day, we’re social scientists, and we need to use human-language categories to make sense of the social world.

That’s well and good when it comes to quantifying the degree to which politicians support gun control. But my more immediate point is about the alienness of the machine-language categories behind these Meta2020 algorithmic interventions. Social scientists simply don’t understand what the newsfeed is.

So we couldn’t have had very informed priors about what these treatments would cause if we didn’t have the capacity to understand what these treatments are. We can definitely update our priors about the effects of these specific treatments — but it’s far less clear how we should update our priors about the human-language belief that “content from like-minded sources causes polarization” or whatever.

I’ve mostly focused on the Meta2020 experiments in this series. In part this is because they are easier to (trick ourselves into thinking we can) understand — and, relatedly, because there have been four of them published so far, compared to just one of the descriptive papers. Mike Wagner’s excellent commentary makes the observation that “it was faster to analyze the experiments…[than] the nonexperimental papers.”

In the framework I’ve presented above, this is because the experiments were able to avoid a detour through machine knowledge.3 The interventions were conceived in human language, and then bluntly translated to the Facebook architecture. These implementations only had to be plausible to the relevant actors; no one else can really check their work.

In contrast, the entire purpose of the descriptive papers is to translate the massive machine lifeworlds powering Facebook and Instagram into our language. This still had to be blunt; we define the categories that we care about, and the data has to be compressed into this one specific dimension (in the case of the only paper published so far, the dimension is “ideology”). But this still requires more attention paid to the act of translation — there’s no flashy intervention to distract from this fundamental task.

This leads to my final point about the Meta2020 collaboration.4 If we accept my argument, we should conclude that even asking these big-picture questions about the effects of this or that aspect of social media was impossible, especially for academics without direct access to Facebook’s architecture.

But also — every one of the papers published as part of this collaboration is evidence of something that no one knew before. Not even at Meta. Here’s a fun trick. Add the phrase “Meta Didn’t Know” to the beginning of the title of every paper in this series and it becomes a news headline.

Meta Didn’t Know About Asymmetric Ideological Segregation in Exposure to Political News on Facebook

Meta Didn’t Know that Like-Minded Sources on Facebook are Prevalent but Not Polarizing

Meta Didn’t Know How do Social Media Feed Algorithms Affect Attitudes and Behavior in an Election Campaign

No one is keeping track of any of this. Any conspiratorial account of Meta doing this or that political thing is mistaken; it’s far too expensive for them to keep track of anything they’re doing. The true language governing Facebook is the language of capital, the brutally low dimensionality of shareholder value — translated into machine-language through the construction of Key Performance Indicators. The human employees in the middle are simply cogs for aligning the KPIs with $META.

So yes, Meta didn’t know any of this before the research collaboration—the political/regulatory question is whether they should’ve. Whether they should be required to know.

The Meta2020 collaboration was indeed ambitious, and it required an unprecedented financial commitment by Meta to pull off. Millions of dollars and thousands of hours by highly-paid employees — let’s go nuts and say it cost them $25 million.5 This is compared against $134 billion in revenue for 2023. That’s about 0.019% of a single year’s revenue devoted to this 6-year project.

Is that the right amount? How about compared to drug companies, or car companies? Does Meta know enough about what their product is, or what it does?

There are technical debates about “alignment” in the context of LLMs or AI more generally: how can we make sure that these machines are aligned with our values? The problem is at least partially one of translation: how do we take our human-language values and encode them in the machine language?

We should approach the question of the Facebook and Instagram newsfeeds the same way, rather than following the avenue pursued by Meta2020. These one-off, 6-year, human-language inspired newsfeed interventions — with outcomes measured by survey responses, which are notoriously difficult to move — are indeed the best social science of social media we can plausibly expect to conduct.

And my conclusion is that we need to recognize the limitations of social science. The only way to effectively regulate something like social media is proactively, and empirical, quantitative social science can only ever be reactive.

The idea of Democratic Performance Indicators gives me hives, aesthetically, but it may be a necessary concession to the ugly world we’ve allowed the tech companies to create. Only by diversifying the metrics on which recommendation algorithms are evaluated can we make them aligned with something other than shareholder value. The Meta2020 collaboration has demonstrated that this is possible, though expensive — now it’s a political question. Can we afford to be governed by a newsfeed that no one understands?

1. There are also big problems of temporal and external validity. How did they decide on the relevant reference categories?!? Why should we assume that this study informs our knowledge of future “high-profile national elections” in the US, or of the other “high-profile national elections” being held in 2024 — but, presumably, not “low-profile” or “subnational” elections being held anywhere at any point?

2. Actually, the best use of LLMs in political science to date has been to tell people to stop doing dumb things with LLMs, like replacing survey respondents with them. While I’m glad that the good people at Political Analysis have come to this conclusion in a way that satisfies their mania for quantification, I believe that moral revulsion is a more straightforward path.

3. Here I’m inverting the definition of cybernetics presented in Andrew Pickering’s fantastic volume The Cybernetic Brain.

4. At least until more papers are published!

5. This doesn’t count the work done by the independent academics, who were not paid in dollars. Their compensation comes in the form of status and career advancement — but most immediately, to academia’s vile Key Performance Indicator, the number of citations on your Google Scholar page.

A technical quibble here: in what way do you see "word embeddings" as unsupervised? They are just computed artifacts based on some other metric of goodness cooked up by humans.

We need more social science of the data engineers.