As I approach my second decade studying digital media, there’s one thing I keep noticing:

There are always new digital media phenomena! And the old ones continue to change or fall into obscurity!

In my opinion, we should pay attention to these new things, figure out what they are, who is consuming them, and what they are doing. But our epistemic institutions are bad at this, for two reasons:

1. The “unit” of academic knowledge production remains the “paper,” a somewhat anachronistic term for a 4,000-10,000 word pdf that takes 2-5 years to put on the internet in a format that academics can put on their CVs and get professional credit for.

2. The “disciplines” within which academic knowledge production takes place have distinct and arcane “standards” for what constitutes a valuable (and professionally recognized) contribution.

This deficiency is important for society — tech companies keep telling us we have no choice but to deal with the new “advance,” and it’d be nice if there was some social entity tasked with figuring out what the new “advance” does (other than make money for the corporation).

And while it’s perhaps overly optimistic to think that anything we publish is ever important for society, it’s still important for us. I care that what I’m doing makes sense, that it’s as useful and as true as possible — that it’s high in “moral validity,” as an esteemed colleague put it.

So I’m writing this especially for younger scholars, PhD students and postdocs, who share my conviction that media is constantly changing, and who want to understand the effects this has on society.

Given my intended audience, this post is more pragmatic, shading perhaps into cynicism. Early-career researchers can’t afford to be too idealistic. An honest meta-science has to be reflexive, to accept that science is produced by scientists, with life cycles and careers and other messy human details which make frictionless fungible SCIENCE nothing but a fantasy. So when I say “ideal” in what follows, I mean “ideal” given our fallen and fallible world.

The ideal research design for studying a New Media Effect goes like this:

Qualitative description: (X) exists, this is what it is.

Quantitative description: A lot of people are consuming (X), here’s how we know.

Causal media effect: (X) does something when people consume it, here’s an experimental demonstration.

Theoretical Synthesis [or, in industry lingo, “The Story”]: This is how (X) explains some larger socially-relevant phenomenon.

My go-to example is Eunji Kim’s fantastic work on capitalist, competitive game shows like Shark Tank.

Qualitative description: These shows exemplify the ideal of capitalism: relatable people compete for a life-changing amount of money based on some plausibly meritocratic criterion.

Quantitative description: These are some of the most popular television shows in the US, and indeed among the most popular media genres of any kind. If we include the related category of professional sports, this is the majority of media.

Causal media effect: Watching these game shows causes people to be more likely to believe that rich people work harder than poor people and to have a greater tolerance for income inequality.

“The Story”: In spite of declining rates of economic mobility and increasing rates of economic inequality, the ubiquity of these “rags-to-riches” shows explains the persistence of Americans’ belief in “The American Dream.”

Guilbeault et al.[1] tackle a more fundamental media technology: the written word versus the image, as I discussed in an earlier post.

Qualitative description: Words and images exist (this one is easy).

Quantitative description: “Each year, people spend less time reading and more time viewing images” and images are more biased than texts.

Causal media effect: Consuming information from Google searches in image form causes people to hold more biased gender/employment beliefs than consuming information in text.

“The Story”: The existing repository of images exhibits more gender bias than does existing text, so the shift to image-based media will exacerbate gender stereotypes.

To these two stellar examples, I’ll add one of my own papers: “The Effect of Streaming Chat on Perceptions of Political Debates,” with Victoria Asbury-Kimmel, Keng-Chi Chang, Katherine T. McCabe, and Tiago Ventura, which more or less follows the formula I outlined above. We were interested in streaming chat, essentially in political Twitch streams avant la lettre,[2] and wanted to see how consuming traditional political media like a Presidential Primary Debate in this anarchic context would change what viewers took away from the media event.

We designed and executed what I think is a clever field experiment, randomly assigning some subjects to watch the whole debate in real time, either on a standard stream or on an official Facebook page live stream — in 2019! Purely out of concern for my excellent junior co-authors, I’ll complain that I think this paper is unfairly slept on and that you should all read and cite it.

Qualitative/Quantitative description: We had to spin this off into another paper, in the Journal of Quantitative Description: Digital Media. The upshot is that participating in a streaming chat is a form of connective effervescence, of experiencing a live crowd online, and thus the comments tend to be frequent and expressive rather than persuasive.

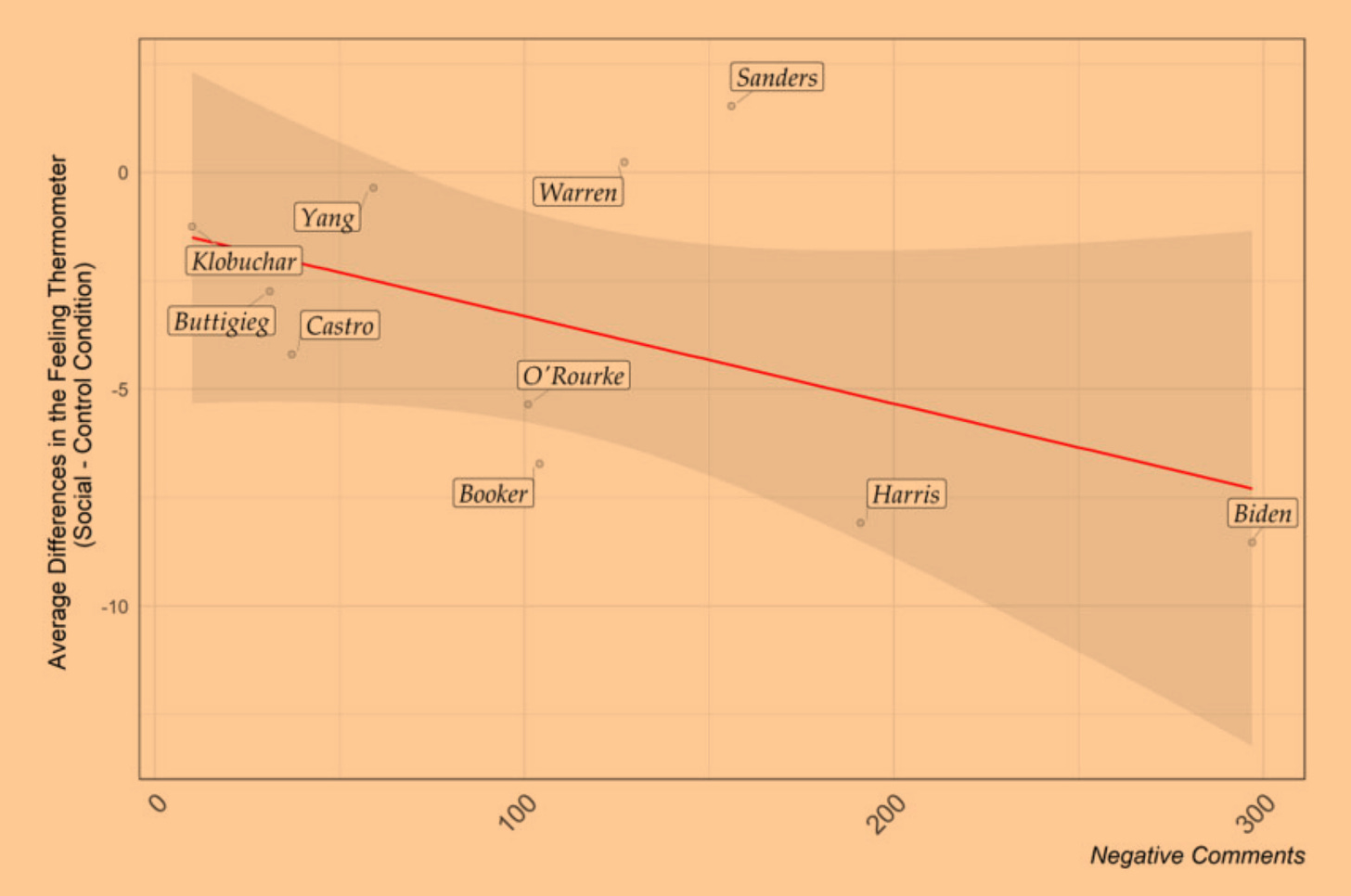

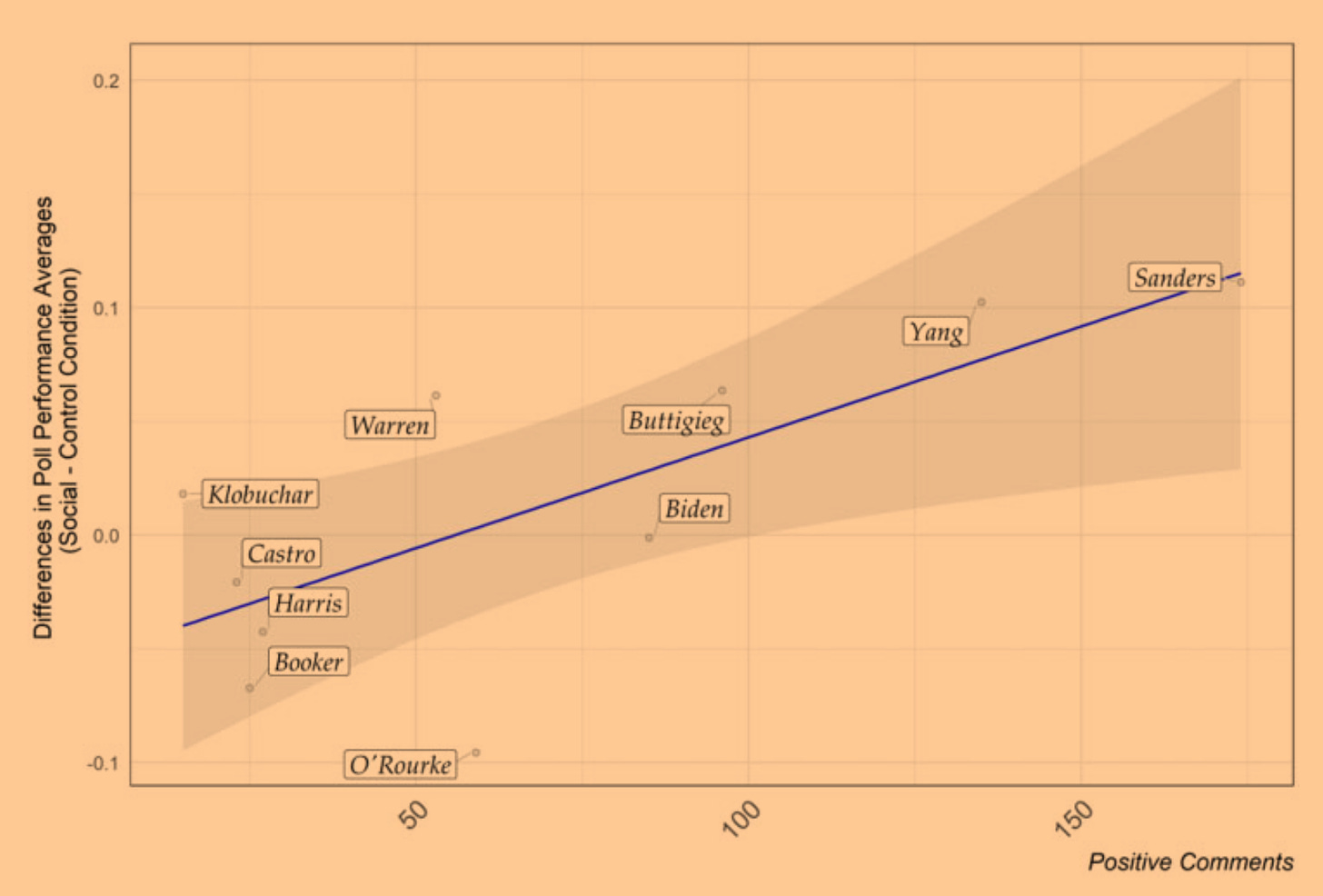

Causal media effect: Watching the political debates as part of a livestream caused viewers to have lower opinions of the politicians targeted by the most negative comments (Biden and Harris), but to have increased predicted post-debate polling for the politicians receiving the most positive comments (Sanders and Yang).

“The Story”: Livestreams cede control to the audience, and the affective effects are asymmetric: memeable and often uncivil/sexist/racist attacks work, but enthusiasm is more important for second-order public opinion, which is probably more important in the online attention economy and for events like Presidential Primaries.

Unfortunately, for reasons 1 and 2 above, this “ideal” type of research is tricky to pull off, especially in “high status” venues that can move the needle on your career. Since I’m doing meta-science here, we’ll work both inductively (from these cases) and deductively (from a theory of how publication works). Note that this is going to be specific to this area of political communication research; meta-science is no more generalizable than normal social science. Each of these three approaches has pros and cons; none of the relevant disciplines is perfect, and they’re each imperfect in their own way.

Kim’s work succeeds because it takes insights from the discipline of Communications and translates them into a canonical Political Science topic. The methods are excellent, but that’s par for the course. The specific limitation of PoliSci here is that it is a discipline founded on a topical scope condition: it has to jealously guard the boundary between “politics” and “not politics” in order to justify its existence as a distinct discipline. So the real theoretical work here is to transmute game shows into “politics.”

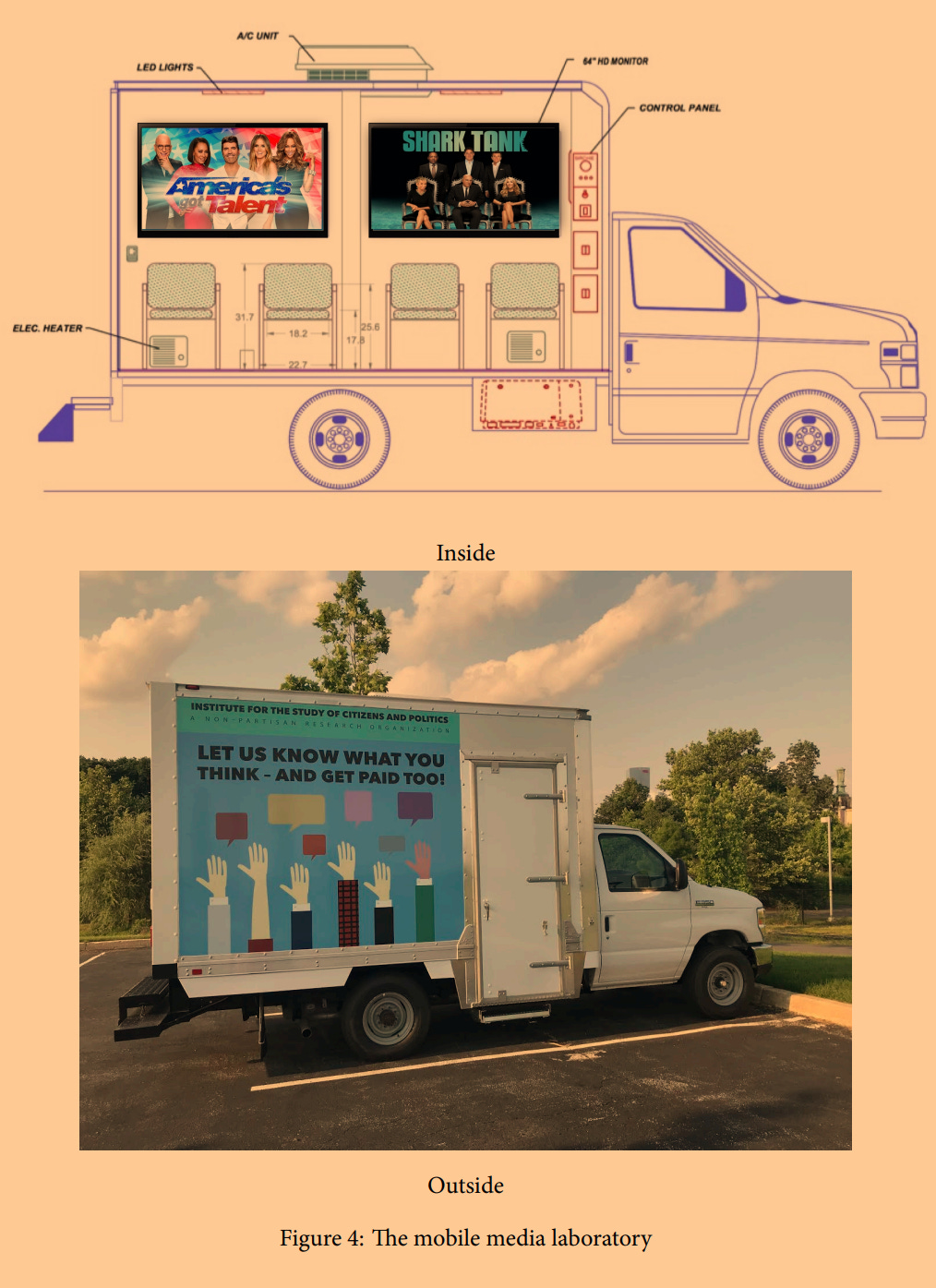

Note also the flair with which the research is executed. One of the two experiments is conducted with a simple online convenience sample — ho hum — but the other takes place in a truck Kim drove around to farmer’s markets in PA and NJ to recruit subjects. The combination of experiments, with convergent results, makes methodological objections to either one far less compelling. It’s also just extremely cool to drive a truck around for science.

Our paper succeeds (to the extent that it does!) by going in the opposite direction — using cutting-edge methods to land in the top Communications journal. This discipline has tended to prioritize methodological flash less than PoliSci has, so this was our comparative advantage. It’s worth noting that this five-person collaboration came about as the result of the 2019 Summer Institute in Computational Social Science at Princeton — huge thanks to all of the participants and especially the organizers Matt Salganik and Chris Bail.

One implication of this methods-first approach to publishing in Comm is that I needed to read a lot of Comm Theory. The crucial transmutation here was to take the experiment that we designed according to our methods training and translate it into the edifice of highly specialized Comm Theory. The specific limitation of Comm is that it tends to put Theory first as a way of policing its disciplinary boundaries; the act of communication is endemic to every social science, so insisting on the theoretical canon is an effective way of demarcating their turf. I ultimately found this process useful — I learned from engaging with the theory, and I think it makes the resulting paper more interoperable with existing research — but it was still an imposing moat that makes publishing interdisciplinary research in Comm journals tricky.

Finally, Guilbeault et al.’s work succeeds because it aims high, landing in the premier scientific outlet by making fundamental claims about how media is changing, and changing human society. This “shoot the moon” strategy appears to be increasingly common among “Computational Social Scientists,” which is how many of the people I find myself interacting with describe themselves. Although I appreciate this label and the associated social movement within science, it’s not uncontroversial. I’ll expand on this point in a later post; having been on the academic job market this year and interviewed for a number of positions in “Computational Social Science” in both PoliSci and other departments, I think I have some valuable perspective.

“Shoot the moon” is the highest payoff strategy, but it’s (definitionally) risky, too. The transmutation here is less into a topic or a theory that the audience cares about — it’s about making the case that this is Big, that it goes in a “general interest” journal. The limitation here is that you’re not responding to an existing literature, you’re responding to people’s general idea of how things are. With a disciplinary approach, there are many outlets at various levels of prestige in which you can eventually publish; with the Science, Nature, PNAS trio, that’s not the case.

Well, it kind of is the case, now, with the advent of Science Advances, Nature Human Behaviour, Nature Communications, and PNAS Nexus. I am conflicted about these journals. We lack a competent meta-science, so I don’t think we have standards to know whether they are “good,” either in theory or in practice. My read of the situation is that publications in these outlets do not tend to be contributing to ongoing, progressive science. Absent stability and a more diverse (and less Jupiterian) epistemic-institutional environment, “Computational Social Science” published only in these outlets will not be a successful endeavor.

Returning to the point about the “unit” of knowledge production: it’s tricky to fit all four of the components of my idealized New Media Effects project into a single “professionally valid”[3] pdf. I already mentioned how my streaming chat project had to be split into two pdfs, the first for the Qualitative/Quantitative Description and the second for the Causal Effect/Story. It’s worth noting that in order to do the first part, I had to literally co-found an academic journal.

Although the problem of professional validity is incredibly frustrating as a limitation on my ability to do what I am arguing is the ideal kind of New Media Effects research, it is also something that can be addressed in bits and pieces at the margins. It is essentially impossible for someone in my position to think about changing disciplinary structures, embedded as they are within both the cultural scientific imaginary and the institutional structure of colleges and universities — but it’s totally possible to start a new journal.

The approach of splitting up the steps into different pdfs technically accomplishes the research agenda I outlined, but it doesn’t always work to give the researcher credit. One minor modification to this would be to add some kind of formal connective tissue that could tie the distinct pdfs together. Think about a television series or a cinematic universe: there are individual media objects with beginning/middle/end, but we all understand them to be part of a larger whole.

It would be useful, for all parties, to be able to formally tie papers together. They could be cited collectively, for example, rather than the most famous one accumulating all of the cites. The method of meta-analysis does something like this, but at a much larger remove and without enhancing the individual researchers’ ability to craft their own narrative of how their work fits together.

In my career, this would’ve been useful for my Twitter Bot Trilogy. The first paper was about combating racist harassment, but it was equally about demonstrating the method.

Tweetment Effects on the Tweeted: Experimentally Reducing Racist Harassment

The second paper is, in my opinion, at least as “good” as the first one, and better in several ways. I learned a lot about what worked and what didn’t, and the case of intervening on partisan incivility during the 2020 US Presidential campaign seems like it’d be an important modification of the original design.

Don’t @ Me: Experimentally Reducing Partisan Incivility on Twitter

The third paper didn’t work quite as well, in that the theory and the operationalization didn’t really gel and the results were less compelling. Still, from a scientific perspective, even this “failure” might be useful for future researchers avoiding these mistakes.

Tweeting for Peace: Experimental Evidence from the 2016 Colombian Plebiscite

The citation count difference among these papers is dramatic: 400 to 50 to 20. For some purposes, sure, only the first paper is relevant — but when it comes to establishing the arc of my career or for helping other researchers explore the relevant literature, it would be helpful to officially tie these papers into an actual Trilogy. One key feature here is the ability to go back in time, to modify the existing pdf when a new pdf emerges; otherwise, the newer papers can cite the older papers, but there’s no formal mechanism for the older papers to point to the newer papers.

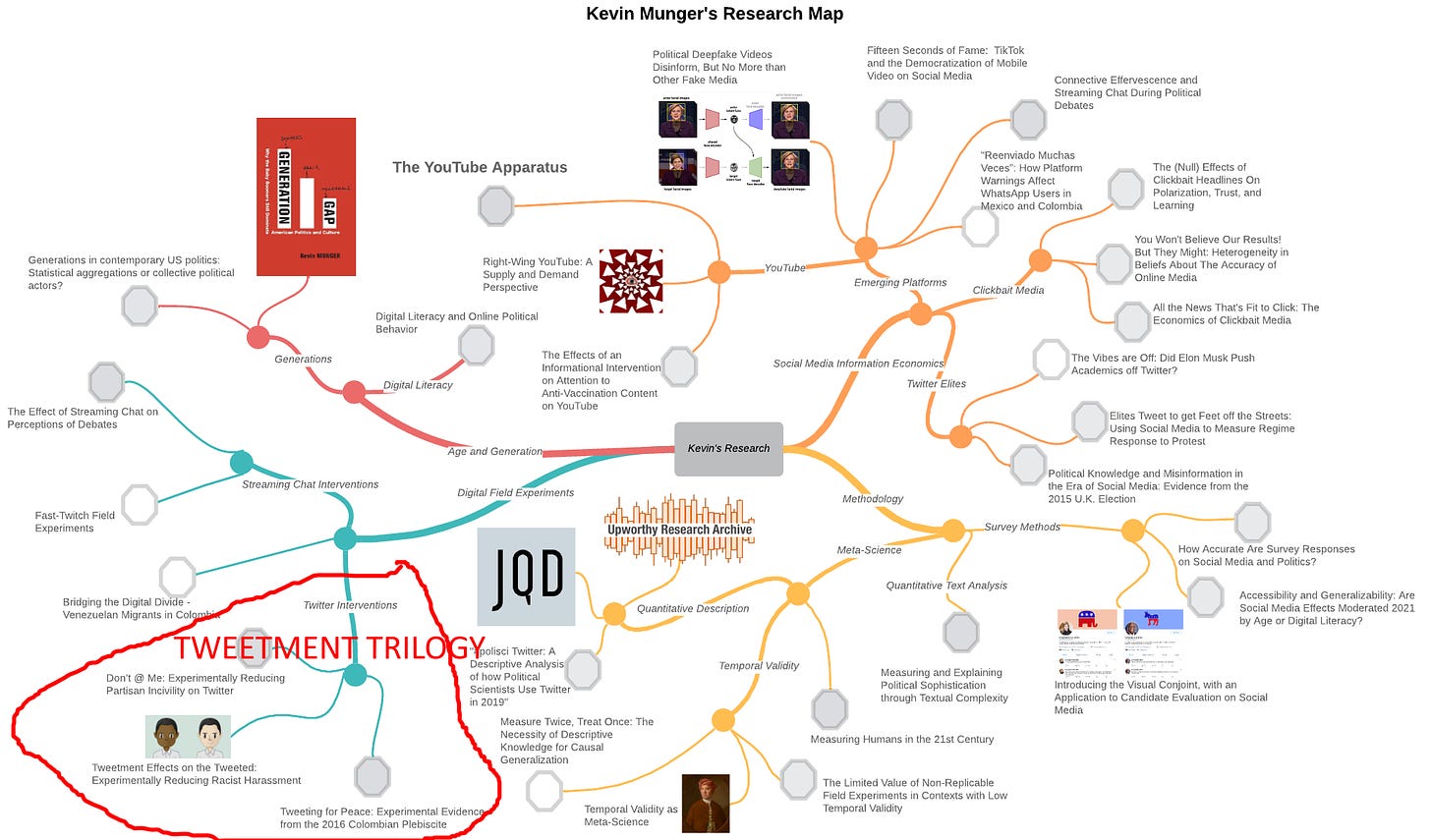

I’ve done an informal version of this with my “research mind map,” a helpful way to organize my pdfs in a way that isn’t simply chronological.
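A more formal version of that connective tissue could be sketched as a small data structure. This is only an illustration of the idea, not a description of any existing citation system: the `Paper` and `Series` classes and their method names are hypothetical, and the citation counts are the rough figures quoted above, assigned in the order the papers are listed. The point is that each paper holds a pointer to its series, so the oldest paper can "see" the newer ones, and the series can be credited as a whole.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Paper:
    title: str
    citations: int  # rough counts quoted in this post, not live data
    # Back-pointer to the series; excluded from repr/eq to avoid cycles.
    series: Series | None = field(default=None, repr=False, compare=False)

    def related(self) -> list[Paper]:
        """Other papers in the same series, including ones added later."""
        if self.series is None:
            return []
        return [p for p in self.series.papers if p is not self]


@dataclass
class Series:
    name: str
    papers: list[Paper] = field(default_factory=list)

    def add(self, paper: Paper) -> None:
        """Register a paper; every existing member can now reach it."""
        paper.series = self
        self.papers.append(paper)

    def total_citations(self) -> int:
        """Citations credited to the series as a whole."""
        return sum(p.citations for p in self.papers)


# Hypothetical usage with the three papers discussed above.
trilogy = Series("Twitter Bot Trilogy")
first = Paper("Tweetment Effects on the Tweeted", 400)
second = Paper("Don't @ Me", 50)
third = Paper("Tweeting for Peace", 20)
for paper in (first, second, third):
    trilogy.add(paper)

print(trilogy.total_citations())
print([p.title for p in first.related()])
```

Because the link lives on the series rather than inside any one pdf, adding a new paper never requires editing the old ones, which is the "go back in time" feature in miniature.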

Of course, the traditional response to the limitations of the “paper” strategy is to write a book: a much longer pdf that could contain an entire New Media Effects research program. I maintain that this strategy is under-rated by young scholars — the idea of writing a book is just so overwhelming — but in some disciplines, the rewards are high. Political Science was traditionally a “book discipline,” and even in very cutting-edge/methods-y departments and subfields, a book (with the right publisher!) can still move the professional needle.

Books are also more interoperable with other status-granting institutions in society. Once you write a book, you are sometimes granted a new title — rather than being simply an Assistant Professor at so-and-so, you are Dr. Kevin Munger, author of The YouTube Apparatus. Make sure that the content of the book is easily inferred from the title! It makes it easier for everyone — hiring committees, journalists, conference organizers, department chairs, deans and demi-deans — to understand who you are in as few words as possible.

Because professional validity also ultimately hinges upon The Story. Here’s a sketch of the idealized New Media Researcher.

Clear Exposition: Finds a New Media phenomenon and explains what it is, in a way that surprises but does not confuse

Theoretical Grounding: Connects with established traditions in the literature, either by showing how they apply to the New Media or using the New Media to resolve existing disputes

Methodological Feats of Strength: Demonstrates knowledge of and capacity to execute the latest methods; these days, likely causal or ML methods

The Story: Has a compact explanation of what they study, slotting into and not disturbing the interlocutor’s map of the scientific world

The ideology of science (“scientism”) shudders at this equivalence between the scientist and the object of study — some of the pushback against meta-science I’ve experienced comes from the sense that scientists live in a communist Mertonian paradise and that attempts to formalize professional validity will cast us out of this Eden into the grubby world of normal economic and political conflict.

I’ve literally never met a grad student or postdoc who feels this way tho. They simply cannot afford to ignore the material conditions, given the stakes for their families, their livelihoods, their own personal stories about how they want their lives to go.

Hence this effort at more applied meta-science (meso-meta-science?) — nothing I’ve written here transcends time and topic, there’s maybe a few hundred people who might really be interested. But my suspicion is that those few hundred people care a lot, and I hope that we can figure out how to make social science of and on the internet work better.

[1] It’s worth noting that both Kim and Guilbeault did their doctoral work at Penn’s Annenberg School for Communication, the only PhD-granting institution (other than MIT’s Media Lab) that I have found to consistently produce people doing truly interdisciplinary media effects research. They are also both friends of mine, full disclosure.

[2] I always knew there must be a foreign term for this phenomenon, and now I know what it is. (Sorry.)

[3] This term comes from a conversation with the excellent Alex Kindel.

This is genuinely impressive, TRUE science imho, in the traditional sense.